We introduce a novel training-free spatial grounding technique for text-to-image generation using Diffusion Transformers (DiT). Spatial grounding with bounding boxes has gained attention for its simplicity and versatility, allowing for enhanced user control in image generation. However, prior training-free approaches often rely on updating the noisy image during the reverse diffusion process via backpropagation from custom loss functions, and they frequently struggle to provide precise control over individual bounding boxes. In this work, we leverage the flexibility of the Transformer architecture, demonstrating that DiT can generate noisy patches corresponding to each bounding box, fully encoding the target object and allowing for fine-grained control over each region. Our approach builds on an intriguing property of DiT, which we refer to as semantic sharing. Due to semantic sharing, when a smaller patch is jointly denoised alongside a generatable-size image, the two become "semantic clones". Each patch is denoised in its own branch of the generation process and then transplanted into the corresponding region of the original noisy image at each timestep, resulting in robust spatial grounding for each bounding box. In our experiments on the HRS and DrawBench benchmarks, we achieve state-of-the-art performance compared to previous training-free spatial grounding approaches.
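The per-timestep transplantation described above can be sketched as follows. This is a minimal NumPy illustration under stated assumptions, not the GrounDiT implementation: the latent is an `(H, W, C)` array, boxes are normalized `(x0, y0, x1, y1)` tuples, and `global_step` and `local_step` are hypothetical callables standing in for one reverse-diffusion step of the full image and of a box's own branch (in the method described here, that branch denoises the patch jointly with a generatable-size image).

```python
import numpy as np

def bbox_to_latent_slice(bbox, latent_h, latent_w):
    """Map a normalized (x0, y0, x1, y1) box to row/column slices of the latent grid."""
    x0, y0, x1, y1 = bbox
    r0, r1 = int(round(y0 * latent_h)), int(round(y1 * latent_h))
    c0, c1 = int(round(x0 * latent_w)), int(round(x1 * latent_w))
    # Keep at least one latent cell so a tiny box never yields an empty patch.
    r1, c1 = max(r1, r0 + 1), max(c1, c0 + 1)
    return slice(r0, r1), slice(c0, c1)

def transplant_step(x_t, boxes, global_step, local_step):
    """One timestep (hypothetical sketch): crop each box's noisy patch, denoise the
    full latent, denoise every patch in its own branch, then paste each result back."""
    h, w = x_t.shape[0], x_t.shape[1]
    regions = [bbox_to_latent_slice(b, h, w) for b in boxes]
    # Crop before the global step in case the global denoiser mutates x_t in place.
    patches = [x_t[rows, cols].copy() for rows, cols in regions]
    x_next = np.array(global_step(x_t))  # np.array copies, so x_t is left untouched
    for i, ((rows, cols), patch) in enumerate(zip(regions, patches)):
        # local_step(patch, i) stands in for the i-th box's separate denoising branch.
        x_next[rows, cols] = local_step(patch, i)
    return x_next
```

With toy callables, e.g. `transplant_step(np.ones((8, 8, 4)), [(0, 0, 0.5, 0.5)], lambda z: 0.9 * z, lambda p, i: np.full_like(p, 1.0))`, the top-left quarter of the returned latent comes from the patch branch and the rest from the global step. Cropping every patch before the global update keeps all branches conditioned on the same noisy input at timestep t.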

Building on the flexibility of the Transformer architecture and the role of positional embeddings in encoding spatial information within DiT, we introduce joint token denoising. We observe that when two noisy images are denoised together, one a smaller patch and the other an image of generatable size, they become semantic clones. Leveraging this "semantic sharing" property of DiT, we propose a novel spatial grounding technique that enables precise control over individual bounding boxes.
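Joint token denoising relies on the fact that a Transformer processes a token sequence of any length, so tokens from two images of different sizes can share one forward pass. The toy sketch below assumes each latent receives its own simplified 2D sine-cosine positional embedding and that a single self-attention layer with hypothetical weights `wq`, `wk`, `wv` stands in for the DiT blocks. It shows only the mechanics (concatenate, attend jointly, split back into the original grids); it does not reproduce PixArt-α's exact embedding layout or demonstrate semantic sharing itself.

```python
import numpy as np

def sincos_2d(h, w, dim):
    """Simplified 2D sine-cosine positional embedding for an h x w token grid."""
    assert dim % 4 == 0, "dim must split evenly into row/col sin/cos parts"
    quarter = dim // 4
    freqs = 1.0 / (10000 ** (np.arange(quarter) / quarter))
    rows, cols = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")

    def enc(pos):
        angles = pos.reshape(-1, 1) * freqs  # (h*w, quarter)
        return np.concatenate([np.sin(angles), np.cos(angles)], axis=1)

    return np.concatenate([enc(rows), enc(cols)], axis=1)  # (h*w, dim)

def self_attention(tokens, wq, wk, wv):
    """Single-head softmax attention over every token in the sequence."""
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    attn = np.exp(scores)
    attn /= attn.sum(axis=-1, keepdims=True)
    return attn @ v

def joint_token_pass(img_a, img_b, weights):
    """Tokenize two (H, W, D) latents, add each its own positional embedding,
    attend over the concatenated sequence, and split the output per image."""
    (ha, wa, d), (hb, wb, _) = img_a.shape, img_b.shape
    ta = img_a.reshape(-1, d) + sincos_2d(ha, wa, d)
    tb = img_b.reshape(-1, d) + sincos_2d(hb, wb, d)
    out = self_attention(np.concatenate([ta, tb]), *weights)
    return out[: ha * wa].reshape(ha, wa, d), out[ha * wa:].reshape(hb, wb, d)
```

Because attention runs over the concatenated sequence, every token of the smaller image can attend to every token of the larger one, which is the channel through which the two images can exchange semantic information during denoising.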

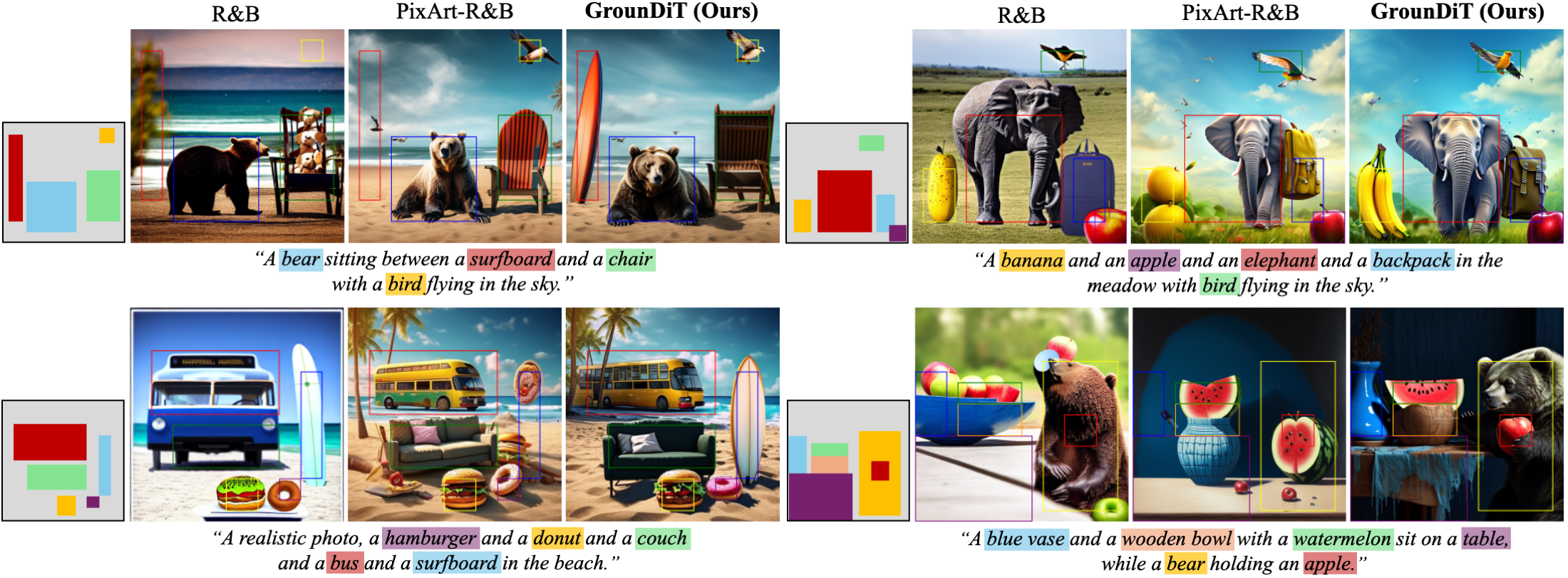

Without additional training, GrounDiT reliably generates each object within its specified bounding box, showing greater robustness than previous methods such as R&B [1].

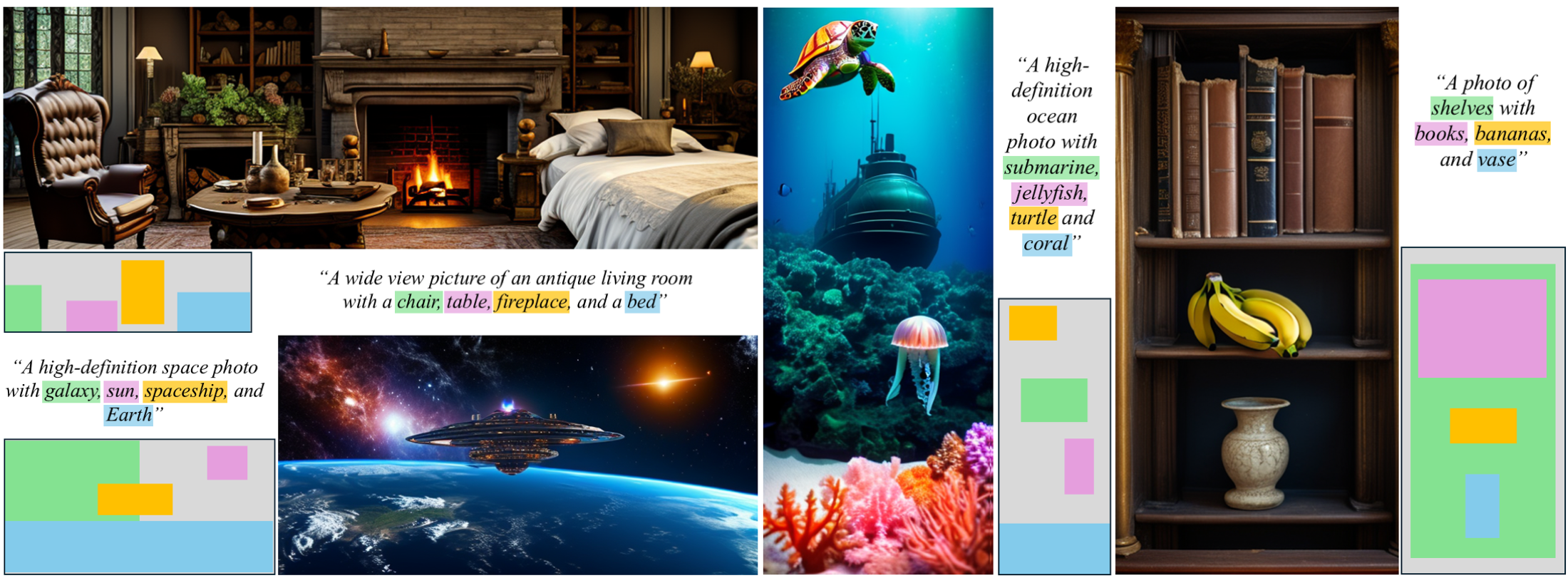

Using PixArt-α [2] as the base DiT model, GrounDiT can further generate spatially grounded images across diverse aspect ratios.

@inproceedings{lee2024groundit,
  title = {GrounDiT: Grounding Diffusion Transformers via Noisy Patch Transplantation},
  author = {Lee, Phillip Y. and Yoon, Taehoon and Sung, Minhyuk},
  booktitle = {Advances in Neural Information Processing Systems},
  year = {2024}
}

We thank Juil Koo and Jaihoon Kim for valuable discussions on Diffusion Transformers. This work was supported by the NRF grant (RS-2023-00209723), IITP grants (RS-2019-II190075, RS-2022-II220594, RS-2023-00227592, RS-2024-00399817), and KEIT grant (RS-2024-00423625), all funded by the Korean government (MSIT and MOTIE), as well as grants from the DRB-KAIST SketchTheFuture Research Center, NAVER-Intel Co-Lab, Hyundai NGV, KT, and Samsung Electronics.

[1] R&B: Region and Boundary Aware Zero-shot Grounded Text-to-image Generation, Xiao et al., ICLR 2024

[2] PixArt-α: Fast Training of Diffusion Transformer for Photorealistic Text-to-Image Synthesis, Chen et al., ICLR 2024